Proven Ways SaaS Founders Validate Product-Market Fit with AI

When it comes to building a successful SaaS product, knowing whether your offering truly resonates with your target market is essential. That’s where understanding how SaaS founders validate product-market fit with AI becomes genuinely useful.

In my experience, using AI tools to interpret user data, analyze customer behavior, and forecast market trends can accelerate the validation process and reduce costly missteps.

But here’s the surprising part — many founders still rely on gut feelings or outdated methods, missing out on AI-driven insights entirely. This article shares my proven approach to integrating AI into your validation workflow, including practical frameworks, real-life case studies, and common pitfalls to avoid.

We’ll explore what product-market fit really means in today’s AI-first business landscape and how to implement these technologies without losing the human touch.

Whether you’re just starting or looking to refine your process, understanding how AI can help you confidently validate your product’s fit can unlock faster growth and better decision-making.

TL;DR

Use AI as a co‑thinking partner to accelerate PMF validation: define a precise ICP, structure interviews, surveys, and usage data, then have AI synthesize patterns, flag leading churn indicators, and suggest hypotheses. Iterate with controlled experiments and human judgment—AI surfaces signals, founders decide trade‑offs and act quickly, measurably, and repeatably.

KEY TAKEAWAYS

- Codify a specific ICP and tag every data source by that profile before analysis.

- Feed structured transcripts, surveys, and product metrics into AI and run co‑thinking sessions to surface leading indicators and testable hypotheses.

- Launch rapid, controlled experiments (cohort flags, pricing or onboarding variants) and retrain models with fresh outcomes to close the loop.

Why Is Tackling Product-Market Fit with AI Smart?

Validating product-market fit with AI isn’t about running faster searches or generating more survey questions. It’s about treating AI as a thinking partner that helps you surface what customers actually want, not just what they say they want. When you approach it that way, the whole process gets sharper, faster, and a lot less guesswork-dependent.

Here’s what I’ve learned working with B2B SaaS founders on AI-driven validation:

- AI speeds up the signal, but you still have to know what you’re listening for

The raw capability is there. AI can analyze customer interview transcripts, cluster feedback themes, and identify patterns across hundreds of data points in minutes. What it can’t do is decide which signals matter for your specific market. That judgment still lives with you.

- Shallow prompts produce shallow answers

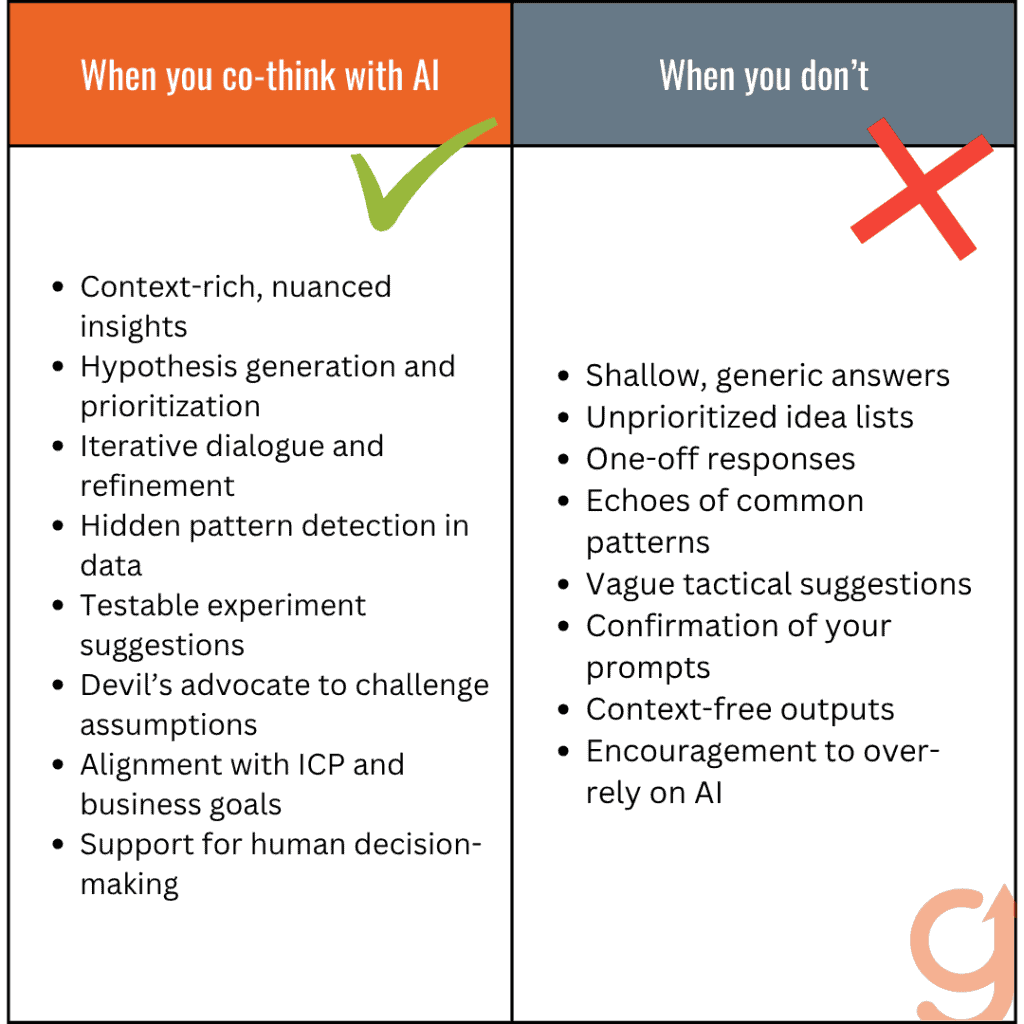

This is the mistake I see most often. A founder asks AI a generic question, gets a generic answer, and concludes that AI isn’t useful for validation. The real problem isn’t the tool. It’s the approach. When I helped a friend who was getting rigid, unusable AI output, the fix wasn’t a better prompt template. It was capturing the full context of his goals and frustrations first, then feeding that to the model. The output changed completely.

- The Co-thinking with AI framework changes what’s possible

Instead of treating AI like a search engine or a content machine, you bring it into the reasoning process. You share context. You push back on its outputs. You ask it to challenge your assumptions about your Ideal Client Profile. That iterative dialogue is where the real validation insight comes from.

- Your Ideal Client Profile (ICP) has to anchor everything

Without a clear ICP, AI validation becomes noise. You end up with insights about everyone, which means insights about no one. The ICP gives AI the filter it needs to make its analysis actually useful.

- Real-world examples matter more than theoretical frameworks

The founders I’ve seen get the most from AI validation are the ones who treat it as an active partner in specific conversations, not a background tool they query occasionally.

The bottom line: AI makes product-market fit validation faster and more rigorous, but only when you bring the right intention to the process. The rest of this article breaks down exactly how to do that.

Does Validating Product-Market Fit with AI Matter?

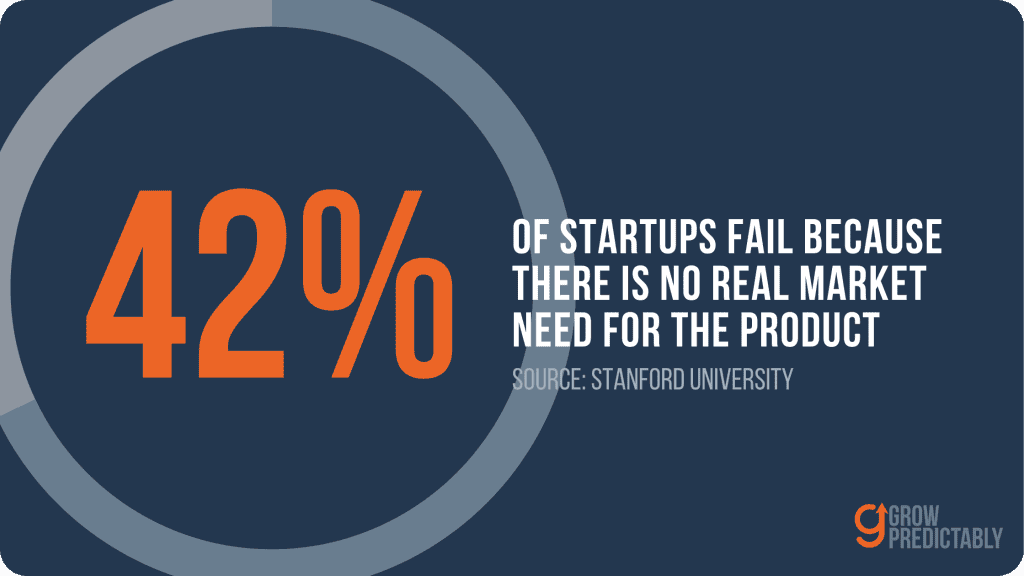

Product-market fit is the difference between a SaaS business that grows and one that quietly dies. For founders, it’s not just a milestone — it’s the question that should drive every decision from day one. And yet, most founders I talk to are validating fit the hard way: slow surveys, expensive customer interviews, and gut instincts dressed up as strategy.

AI changes that equation, but only if you treat it as a thinking partner rather than a search engine.

What Product-Market Fit Means for SaaS Founders

Product-market fit is your North Star. It’s the moment when your product stops being something you built and starts being something your customers can’t live without. For B2B SaaS founders specifically, that means a very specific kind of customer — your Ideal Client Profile — is getting real, repeatable value from what you’ve built.

But here’s what most people get wrong: they treat PMF like a finish line. It’s not. It’s a signal. A signal that tells you the promise you made in your marketing actually matches the experience customers have after they sign up.

The life of any startup can be divided into two parts — before product/market fit and after product/market fit.

Marc Andreessen, co-founder of Netscape

I call that the promise-to-experience handoff. When it works, you get retention, referrals, and expansion revenue. When it doesn’t, you get a leaky bucket — pouring leads into a funnel that’s draining from the bottom.

The founders who nail PMF fastest are the ones who stay obsessed with that handoff. They don’t just ask “are people buying?” They ask “are people staying, growing, and telling others?”

The Traditional Challenges in Validation

Validating PMF without AI is slow, expensive, and often misleading. Most founders rely on a handful of customer interviews, a survey with 40 responses, and whatever pattern-matching they can do in their heads. That’s not diagnosis — that’s guesswork with a professional veneer.

The real problem is signal versus noise. You get one customer who loves the product and three who churned quietly. You don’t know if the churn was a pricing issue, an onboarding failure, or a fundamental mismatch with your ICP. So you spin your wheels on symptoms instead of root causes.

I’ve seen B2B SaaS teams spend six months “validating” a market by talking to prospects who seemed enthusiastic but never converted. The feedback sounded good. The adoption never came. The bottleneck wasn’t the product — it was that they were talking to the wrong people entirely. Their Ideal Client Profile was blurry, and every conversation reinforced the wrong assumptions.

Traditional validation also doesn’t scale. You can only run so many interviews. You can only synthesize so much qualitative data manually before the insights start blending together into vague generalities.

The Promise of AI in This Journey

Here’s what shifted for me starting around 2021: I stopped using AI to get answers and started using it to think better. That’s a different relationship entirely.

I remember a friend who was getting rigid, unusable output from AI despite carefully structured prompts. The code wasn’t working. The ideas felt generic. He was frustrated. So I sat down with him, captured the full context of what he was actually trying to build — his goals, his constraints, his frustrations — transcribed the whole conversation, and fed that to GPT.

The output was completely different. Better code. More creative solutions. The missing ingredient wasn’t a better prompt. It was intention and context.

That experience crystallized something I now call co-thinking with AI. It’s the idea that AI’s real value isn’t in generating answers — it’s in helping you ask sharper questions, surface patterns you’d miss, and pressure-test assumptions before they cost you six months of runway.

For SaaS founders validating PMF, this matters enormously. AI can help you synthesize customer interview transcripts at scale, identify clustering patterns in churn data, and stress-test whether your ICP definition actually holds up against real market signals.

It won’t replace the judgment call you have to make as a founder. But it will make that judgment call a lot more informed.

What Is Product-Market Fit and How Can AI Help Me Validate It?

Product-market fit means your product solves a problem so well that customers can’t imagine going back to life without it. For SaaS founders, it’s the difference between a product people tolerate and one they actively pull into their workflows.

AI accelerates the validation process by surfacing patterns in customer behavior, feedback, and engagement data that would take weeks to find manually — letting you act on real signals instead of gut instinct.

Defining Product-Market Fit from My Founder Lens

Most founders I talk to treat product-market fit like a finish line. Get there, plant the flag, move on. That’s the wrong mental model.

I think of it more like a calibration process. You’re constantly asking: does the problem I’m solving actually matter enough to the people I’m solving it for? And are those the right people?

This is where the Ideal Client Profile (ICP) framework becomes essential. Before you can validate fit, you need clarity on who you’re fitting for. I’ve watched founders collect a thousand data points on the wrong customers and wonder why nothing clicked. The data wasn’t the problem. The targeting was.

When I work through ICP definition, I’m not just listing firmographics. I’m asking: what does transformation look like for this person? What are they losing by not having a solution? What does success feel like on a Tuesday afternoon when everything’s working? That specificity changes what you measure and what you build.

Product-market fit isn’t a number. It’s a pattern of behavior — retention curves that flatten instead of drop, unprompted referrals, customers who get upset when you talk about removing a feature. Those are the signals that matter.
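To make "retention curves that flatten instead of drop" a little more concrete, here is a minimal sketch that checks how much retention is still being lost over the most recent periods. The function name `retention_flattens`, the three-period window, and the 3-point tolerance are illustrative assumptions, not thresholds I'm prescribing. The cohort numbers are made up.

```python
def retention_flattens(curve, window=3, tolerance=0.03):
    """Return True if retention lost over the last `window` periods is within `tolerance`.

    `curve` is a list of retention fractions per period (e.g. weekly), oldest first.
    """
    if len(curve) < window + 1:
        raise ValueError("need at least window + 1 data points")
    recent_drop = curve[-window - 1] - curve[-1]
    return recent_drop <= tolerance


# Hypothetical weekly retention for two cohorts (illustrative numbers only)
flattening = [1.00, 0.62, 0.48, 0.43, 0.42, 0.41, 0.41]
leaking = [1.00, 0.60, 0.45, 0.34, 0.26, 0.19, 0.14]

print(retention_flattens(flattening))  # curve has levelled off
print(retention_flattens(leaking))     # curve is still dropping
```

A check like this only tells you the shape of the curve. Whether the level it flattens at is good enough for your business is still a judgment call.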

How AI Amplifies Customer Feedback and Market Signals

Most founders are drowning in feedback but starving for insight. You’ve got support tickets, NPS responses, sales call recordings, churn surveys, onboarding drop-off data. It’s all there. The problem is synthesizing it fast enough to actually act on it.

I’ve been using AI as what I call a co-thinking partner in this process. Not as a tool that spits out answers, but as a collaborator that helps me ask better questions about what I’m seeing.

The shift happened when I stopped prompting AI with tasks and started sharing full context. I’d feed in a batch of customer interviews, churn reasons, and behavioral data, then have a conversation with the AI about what patterns it noticed and why they might be happening. The output was completely different from what I got when I just asked “summarize this feedback.”

As Ethan Mollick, Wharton professor and author of Co-Intelligence (2024), explains, AI works best when humans treat it as a thought partner rather than a search engine — the quality of the collaboration depends on the quality of the context you bring to it.

In practice, I use AI to cluster qualitative feedback into themes without losing the nuance of individual voices, identify leading indicators of churn before they show up in revenue numbers, cross-reference engagement patterns with ICP attributes, and flag anomalies in onboarding behavior that correlate with long-term retention.

The goal isn’t more data. It’s cutting through to the signal faster. Vanity metrics — page views, sign-ups, demo requests — can make a leaky product look healthy. What I care about is whether the right customers are getting real value, coming back, and telling others.

How SaaS Founders Validate Product-Market Fit with AI

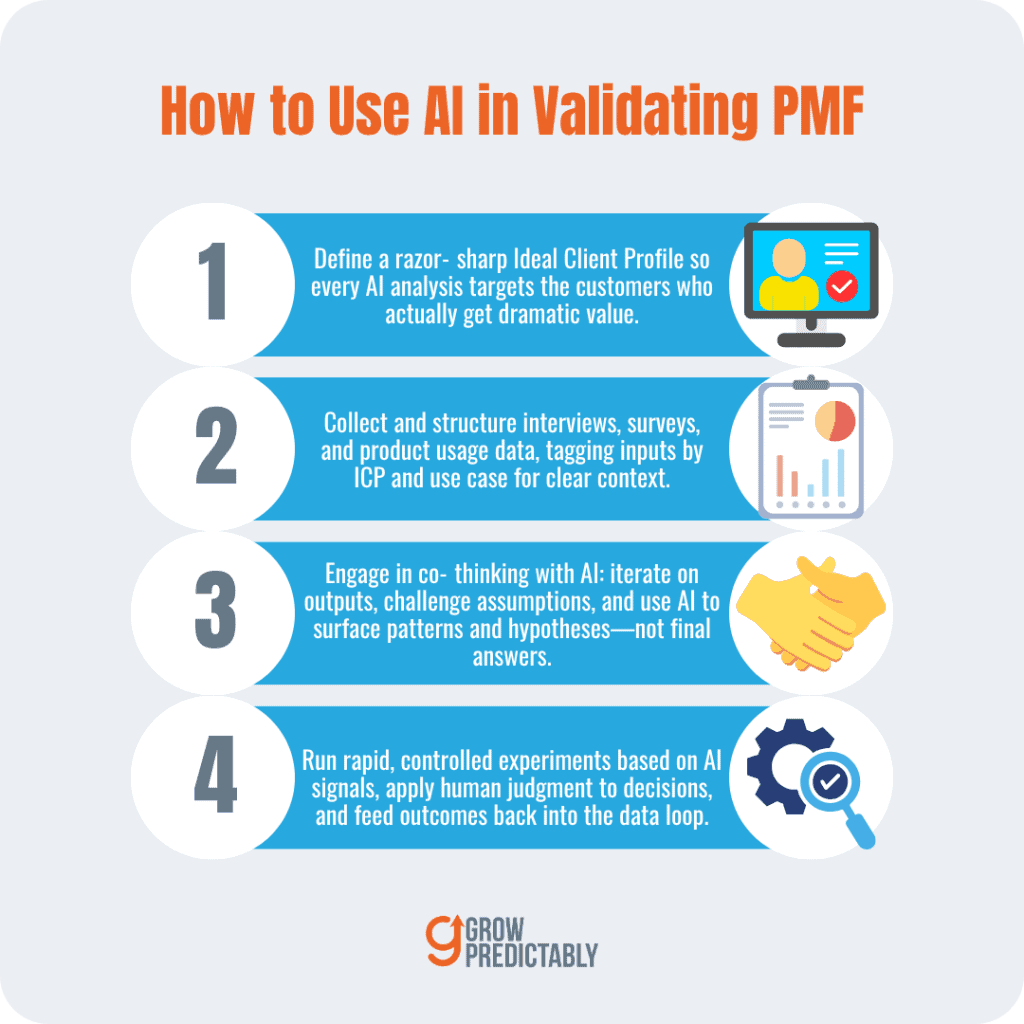

Implementing AI in your product-market fit validation process works best as a four-step cycle: define a precise Ideal Client Profile, collect structured customer data, engage AI as a thinking partner to interpret what you find, and iterate based on what AI surfaces combined with your own strategic judgment.

Skip any of these steps and you’re not validating — you’re guessing with extra technology.

Step 1: Define Your Ideal Client Profile (ICP) Precisely

Before you feed a single data point into an AI tool, you need clarity on who you’re actually validating for. This sounds obvious. Most founders skip it anyway.

I’ve seen this pattern repeatedly with B2B SaaS teams: they collect mountains of user feedback, run it through AI analysis, and get back noise. The problem isn’t the AI. It’s that they asked it to analyze signals from five completely different customer types at once. The output is a blur.

A sharp Ideal Client Profile fixes this. Not a vague persona with a stock photo and a job title. Specific attributes — company size, growth stage, the exact problem they’re losing sleep over, what they’ve already tried, why those attempts failed. The more precise your ICP, the more focused your AI analysis becomes.

Start by asking: who are the customers where your product created a genuinely dramatic improvement? Not satisfied customers. Dramatically better-off customers. Build your ICP around those people. That’s your signal.

How to build and use an ICP you can actually feed to AI:

- Start with a one‑page ICP template. Include firmographics (company size, ARR, industry), buyer role and persona (title, decision power, day-to-day pain), technical context (existing stack, integrations), triggering events (what prompts a purchase), and the measurable value they gain (time saved, revenue uplift, churn reduction).

- Score historic customers. Pick the top 10 customers by lifetime value or expansion and score them against your template. Look for common attributes — those are your primary ICP signals.

- Choose a single anchor metric. Pick one value metric (e.g., 30‑day activated retention, time‑to‑first‑value) that demonstrated the “dramatic improvement.” This becomes the KPI for ICP validation.

- Create an ICP hierarchy. If you see two repeating patterns, make a Primary ICP and a Secondary ICP. Never analyze them together; tag data separately.

- Operationalize before analysis. Tag every interview, survey response, and telemetry row with ICP flags (Primary, Secondary, Other), onboarding path, and customer vintage. AI analysis on tagged data yields diagnosis, not noise.
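The template, scoring, and tagging steps above can be sketched in a few lines. This is one possible shape, not a standard: the class `IcpTemplate`, the functions `icp_score` and `icp_flag`, the four attributes checked, and the 0.75 cut-off are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IcpTemplate:
    min_employees: int
    max_employees: int
    industries: frozenset
    required_stack: frozenset   # tools the customer must already use
    trigger_events: frozenset   # events that typically prompt a purchase


def icp_score(customer: dict, icp: IcpTemplate) -> float:
    """Fraction of ICP attributes (0.0 to 1.0) that a customer record matches."""
    checks = [
        icp.min_employees <= customer["employees"] <= icp.max_employees,
        customer["industry"] in icp.industries,
        icp.required_stack <= set(customer["stack"]),
        bool(icp.trigger_events & set(customer["triggers"])),
    ]
    return sum(checks) / len(checks)


def icp_flag(customer: dict, primary: IcpTemplate, secondary=None, threshold=0.75) -> str:
    """Tag a record as Primary, Secondary, or Other (threshold is an assumption)."""
    if icp_score(customer, primary) >= threshold:
        return "Primary"
    if secondary is not None and icp_score(customer, secondary) >= threshold:
        return "Secondary"
    return "Other"


# Hypothetical template and customer records
primary = IcpTemplate(50, 200, frozenset({"saas"}), frozenset({"salesforce"}),
                      frozenset({"weekly_reporting_pain"}))
fit = {"employees": 120, "industry": "saas",
       "stack": ["salesforce", "slack"], "triggers": ["weekly_reporting_pain"]}
miss = {"employees": 900, "industry": "retail", "stack": ["hubspot"], "triggers": []}

print(icp_flag(fit, primary), icp_flag(miss, primary))
```

The point of keeping Primary and Secondary as separate templates is the same as in the list above: each record gets exactly one flag, so the two profiles are never analyzed as one blended group.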

Quick practical example:

- Pick 8 top customers with highest expansion rates.

- Fill the ICP template for each. Note recurring company size = 50–200 employees, use of Salesforce, and a weekly reporting pain.

- Define anchor metric = % of users completing value event within 7 days.

- Tag all interview transcripts and product events with that ICP flag.

- Feed the tagged dataset to AI and ask: “What caused the 7‑day value event to happen for Primary ICP vs. Others?”

- Run a targeted onboarding test for the Primary ICP cohort, measure the anchor metric, and feed results back to retrain your model.

Do this once and you turn AI from a noisy summarizer into a precision tool that surfaces the right hypotheses about the customers who actually get dramatically better off.
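For the anchor metric in the example above (share of users completing the value event within 7 days), a minimal sketch of the per-ICP calculation might look like this. The field names `icp_flag`, `signed_up_at`, and `first_value_at` are assumptions about how your telemetry is shaped, and the sample users are invented.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def activation_rate_by_flag(users, window_days=7):
    """Share of users per ICP flag who hit the value event within `window_days` of signup."""
    totals = defaultdict(int)
    activated = defaultdict(int)
    window = timedelta(days=window_days)
    for user in users:
        flag = user["icp_flag"]
        totals[flag] += 1
        first_value = user.get("first_value_at")  # None if the value event never happened
        if first_value is not None and first_value - user["signed_up_at"] <= window:
            activated[flag] += 1
    return {flag: activated[flag] / totals[flag] for flag in totals}


# Hypothetical telemetry rows
users = [
    {"icp_flag": "Primary", "signed_up_at": datetime(2024, 1, 1),
     "first_value_at": datetime(2024, 1, 3)},
    {"icp_flag": "Primary", "signed_up_at": datetime(2024, 1, 1),
     "first_value_at": datetime(2024, 1, 20)},
    {"icp_flag": "Other", "signed_up_at": datetime(2024, 1, 2),
     "first_value_at": None},
]
rates = activation_rate_by_flag(users)
print(rates)
```

Computing the metric per flag, rather than across all users, is what lets you ask the "Primary ICP vs. Others" question with numbers behind it.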

Step 2: Collect and Structure Customer Data for AI Analysis

AI can’t read between the lines of bad data. How you collect and structure your inputs matters enormously.

I rely on three sources:

- Structured customer interviews

- Targeted surveys

- Product usage data

Each one tells a different part of the story. Interviews surface the emotional context — why someone cared enough to try your product. Surveys give you patterns across a larger group. Usage data shows you what people actually do versus what they say they do.

The gap between those last two is often where the real insight lives.

When I prep data for AI analysis, I don’t just dump raw transcripts into a prompt. I organize it. I tag responses by customer segment, by the problem they came in with, by how long they’ve been a customer. Context is everything.

An AI tool analyzing 50 interview transcripts with zero structure will give you a summary. The same transcripts, organized by ICP segment and tagged by use case, will give you a diagnosis.
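Here is a rough sketch of what "organized by ICP segment and tagged by use case" can look like before anything reaches a model. It only builds the structured payload and does not call any AI service. The function name `build_analysis_payload` and the field names are hypothetical, and the transcript snippets are invented.

```python
import json
from collections import defaultdict


def build_analysis_payload(transcripts):
    """Group tagged transcripts by ICP segment and use case before handing them to a model."""
    grouped = defaultdict(lambda: defaultdict(list))
    for t in transcripts:
        grouped[t["icp_segment"]][t["use_case"]].append(
            {"customer_months": t["customer_months"], "text": t["text"]}
        )
    # Sort keys so the same input always produces the same payload.
    return json.dumps(grouped, indent=2, sort_keys=True)


# Hypothetical tagged transcripts
transcripts = [
    {"icp_segment": "Primary", "use_case": "weekly reporting", "customer_months": 14,
     "text": "We used to build the report by hand every Friday."},
    {"icp_segment": "Other", "use_case": "ad hoc export", "customer_months": 2,
     "text": "I only needed a one-off CSV."},
]
payload = build_analysis_payload(transcripts)
print(payload)
```

Pasting a payload like this into the conversation, along with your goals and constraints, gives the model the segment boundaries up front instead of asking it to guess them.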

Step 3: Engage in Co-thinking with AI to Interpret Results

This is where most founders either over‑trust AI or completely dismiss it. Both are mistakes.

I had a friend who was building a development tool and kept getting rigid, unusable output from AI — even with well‑structured prompts. The issue wasn’t the prompt. It was that he was treating AI like a vending machine: put in a request, get out an answer.

When I sat down with him, captured the full context of what he was actually trying to solve, transcribed that conversation, and fed that to the model — everything changed. Better analysis. More creative options. Actual insight.

That experience crystallized what I now call Co‑thinking with AI. You’re not prompting better. You’re treating AI as a thinking partner you’re actively working with, not a tool you’re extracting answers from. The distinction sounds subtle. The results are not.

In practice, I don’t accept the first output. I push back. I ask the AI to argue the opposite position. I ask it what signals it might be missing. I ask it to identify which customer segments are behaving differently and why. Passive automation gives you a report. Co‑thinking gives you understanding.

How co‑thinking looked for the dev‑tool founder:

| Step | Action |
|---|---|
| 1. Capture full context | Record a 30‑minute walkthrough: product goals, target user (backend engineers at Series A–B), onboarding flow, KPIs (7‑day activation), and suspected pain points. |
| 2. Prepare artifacts | Bundle 20 support tickets, 10 interview transcripts, CI/CD telemetry, and the onboarding flow map. Tag each item by user segment and onboarding path. |
| 3. Seed the model | Provide the narrative and constraints to the model with a prompt: “List top 5 hypotheses explaining low 7‑day activation for Engineer ICP and rank by likelihood and ease of test.” |
| 4. Push, probe & falsify | For each hypothesis, ask AI to argue the opposite and specify what telemetry signal would falsify it. Use those signals to design null tests. |
| 5. Convert outputs to experiments | Turn AI suggestions into three targeted tests (sample project in onboarding, SDK default config change, contextual in‑app hints), assign cohorts, and instrument the anchor metric. |
| 6. Measure, iterate & retrain | Feed results back into the dataset, ask AI to analyze pre/post telemetry, revise hypotheses, and rerun tests. Activation improved 18% for the Primary ICP in two weeks. |
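Steps 3 to 5 in the table can be kept honest with a small record per hypothesis: what it claims, how likely and how easy to test you judge it to be, and what telemetry would prove it wrong. The sketch below is one way to do that. The `Hypothesis` class, the 1 to 5 scales, the likelihood-times-ease priority, and the three example entries (loosely based on the three tests named in the table) are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    statement: str
    likelihood: int         # 1 (unlikely) to 5 (very likely), scored by the founder
    ease_of_test: int       # 1 (hard) to 5 (easy)
    falsifying_signal: str  # telemetry that would prove the hypothesis wrong


def rank_hypotheses(hypotheses):
    """Order hypotheses by likelihood x ease of test, highest priority first."""
    return sorted(hypotheses, key=lambda h: h.likelihood * h.ease_of_test, reverse=True)


# Hypothetical entries with made-up scores
candidates = [
    Hypothesis("SDK default config fails on first run", 5, 3,
               "first-run error rate is the same for activated and non-activated users"),
    Hypothesis("New users have no sample project to try", 4, 5,
               "adding a sample project does not change 7-day activation"),
    Hypothesis("Users miss key features without in-app hints", 3, 4,
               "hint impressions show no relationship with feature usage"),
]
ranked = rank_hypotheses(candidates)
for h in ranked:
    print(h.likelihood * h.ease_of_test, h.statement)
```

Writing the falsifying signal down before the experiment runs is the part that matters most. The scores are yours to argue about with the model, not something it should set on its own.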

Step 4: Iterate Based on AI-Driven Insights and Human Judgment

AI finds patterns. You decide what those patterns mean for your business.

AI might surface that a specific customer segment has dramatically higher engagement with one feature. That’s a signal. But whether that signal means you should double down on that segment, rebuild your onboarding around that feature, or rethink your pricing — that’s a strategic call. AI doesn’t make that call. You do.

Validation is never a one-time event. I treat it as a loop. AI uncovers something interesting. I form a hypothesis. I design a quick test — a conversation, a pricing experiment, a feature flag for a specific cohort. I collect new data. I bring it back to AI for another round of analysis. Each cycle sharpens the picture.

The founders who get stuck in PMF validation are usually waiting for a definitive answer that never comes. The ones who make progress treat every round of AI analysis as a slightly better map, not a final destination.
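When a quick test in this loop compares two cohorts on a rate (say, 7-day activation with and without a new onboarding step), a two-proportion z-test is one common way to check whether the difference is bigger than noise. This is a minimal standard-library sketch, not a full experimentation framework. The function name and the cohort counts are hypothetical, and with small cohorts the result should be read with caution.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). Uses the pooled-proportion standard error,
    so it assumes at least one conversion and one non-conversion overall.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value


# Hypothetical cohorts: control activates 60 of 200, variant activates 84 of 200
z, p_value = two_proportion_z_test(60, 200, 84, 200)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value tells you the difference is unlikely to be chance. It does not tell you whether the change is worth shipping, which is still the strategic call described above.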

What Are Common Pitfalls SaaS Founders Face When Using AI for Validation?

The biggest mistakes SaaS founders make with AI validation aren’t technical — they’re philosophical. Founders treat AI like a magic answer machine, feed it shallow prompts, ignore the human context behind customer behavior, and then stop validating once they hit early traction.

Each of these traps can send you confidently in the wrong direction, which is worse than being uncertain in the right one.

Mistaking AI as a Magic Wand

I see this constantly. A founder gets excited about AI, runs a few prompts about their target market, gets back a clean, confident-sounding summary, and takes it as gospel. The problem isn’t the AI. It’s the expectation.

AI doesn’t know your customers. It knows patterns from text that existed before your product did. When I started using AI more seriously in my own work, I had to fight the urge to treat every output as validated insight. The outputs felt authoritative. But authority and accuracy aren’t the same thing.

The shift that helped me most was moving from “what does AI say?” to “what can AI help me think through?” You’re not outsourcing your judgment. You’re sharpening it through dialogue.

As Ethan Mollick explains in Co-Intelligence (2024): “The key error people make is treating AI as an oracle rather than a collaborator. Oracles give answers. Collaborators help you ask better questions.”

Relying on Shallow or Generic AI Prompts

Poor input produces misleading output. I learned this watching a friend struggle with a development project. He had structured prompts, clear requirements

⮞ How does co-thinking with AI differ from traditional validation methods?

Co-thinking with AI is a collaborative, iterative process where the founder and AI work together to generate hypotheses, run fast experiments, and synthesize signals. Unlike traditional validation—where you run discrete research phases (surveys, manual interviews, and A/B tests) and then wait for results—AI lets you explore many variations quickly, surface hidden patterns, and prioritize which experiments to run next.

AI accelerates hypothesis generation by suggesting user segments, messaging angles, and metric thresholds based on historical and real‑time data. It automates synthesis of qualitative feedback so you can spot friction points faster. But it’s not a replacement for judgment: you still need to design experiments, choose causal tests, and interpret trade-offs.

In practice, co-thinking shortens the validation loop, increases the hypothesis space you can test, and makes continuous learning operational. It also demands better inputs (clean data, clear metrics) and human governance to avoid overfitting to noisy signals or optimizing for vanity metrics instead of long-term retention and value delivery.

⮞ What common mistakes should SaaS founders avoid when using AI for validation?

AI speeds things up, but founders often repeat the same strategic errors more quickly. Watch for these traps and how to avoid them.

- Treating AI as an oracle. Relying on model outputs without designing experiments leads to false confidence. Use AI to generate hypotheses, then validate with randomized or real-world tests.

- Using poor or biased data. Garbage in, garbage out. Clean, representative data and clear labeling matter more than model complexity.

- Chasing surface metrics. Optimizing for clicks or signups can hide poor retention. Focus on value metrics (activation, retention, expansion).

- Overfitting to early adopters. Models trained on small niche cohorts may mislead broader GTM decisions. Validate across segments.

- Ignoring causal inference. Correlation from AI suggestions isn’t causation. Run controlled experiments or instrumental-variable analyses where possible.

- Skipping human-in-the-loop checks. Customer interviews and expert review should verify AI insights before major pivots.

- No feedback loop. If you don’t continually retrain models with fresh outcomes, insights become stale. Automate periodic re-evaluation.
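For the randomized tests mentioned in the list above, one simple building block is deterministic bucketing: hash the user and experiment name so each user lands in the same variant every time, without storing an assignment table. This is a sketch under that assumption. The function name `assign_variant` and the experiment label are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("control", "treatment")) -> str:
    """Deterministically bucket a user so they always see the same variant."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


# The same user and experiment always map to the same bucket
first = assign_variant("user-42", "onboarding-sample-project")
second = assign_variant("user-42", "onboarding-sample-project")
print(first, first == second)
```

Including the experiment name in the hash keeps bucket membership independent across experiments, so the same users are not always the ones who get the treatment.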

⮞ Is product-market fit a one-time achievement or an ongoing process with AI?

Product-market fit is an ongoing process, and AI makes that continuous work more practical and measurable. Markets evolve, competitors move, and customer needs shift, so the “fit” you find today can drift tomorrow. With AI, you can instrument leading indicators—cohort retention, activation velocity, feature usage patterns—and get real-time alerts when signals degrade.

AI helps you run many micro-experiments, personalize onboarding, and simulate pricing or packaging changes before broad rollout. But continuous validation still requires human decisions about which trade-offs to accept, which segments to double down on, and when to pivot or expand.

Think of AI as a real-time co-pilot that monitors health metrics, proposes experiments, and flags anomalous trends, while you set the strategic thresholds and act on insights. In short: PMF isn’t a trophy; it’s a maintenance plan you can scale with AI.
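As a small illustration of "alerts when signals degrade", the sketch below compares the recent average of a leading indicator with its earlier baseline and flags a relative drop beyond a threshold you set. The function name `signal_degraded`, the window sizes, and the 10% threshold are assumptions for illustration, and the weekly figures are invented.

```python
from statistics import mean


def signal_degraded(history, recent_periods=2, baseline_periods=6, max_relative_drop=0.10):
    """Flag a metric whose recent average fell more than `max_relative_drop` below baseline."""
    if len(history) < recent_periods + baseline_periods:
        return False  # not enough data to judge
    recent = mean(history[-recent_periods:])
    baseline = mean(history[-(recent_periods + baseline_periods):-recent_periods])
    if baseline == 0:
        return False  # a relative drop is undefined against a zero baseline
    return (baseline - recent) / baseline > max_relative_drop


# Hypothetical weekly 7-day activation rates for one ICP cohort
slipping = [0.40, 0.42, 0.41, 0.39, 0.40, 0.41, 0.33, 0.31]
stable = [0.40, 0.42, 0.41, 0.39, 0.40, 0.41, 0.40, 0.41]

print(signal_degraded(slipping), signal_degraded(stable))
```

The threshold is the strategic part: the code only reports that a line was crossed, and you decide where that line sits and what happens next.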

⮞ What role does the Ideal Client Profile play in AI validation processes?

The ICP is the anchor for every AI-driven validation effort. Start by codifying your ICP attributes clearly. Then use them to:

- Train models: Filter and weight training data so AI learns from the right cohort signals.

- Segment experiments: Run targeted A/B tests and cohort analyses aligned to ICP segments for cleaner insights.

- Guide prompts and scenario design: Create synthetic personas and ask AI to simulate their jobs-to-be-done and objections.

- Prioritize features and hypotheses: Let ICP-aligned value metrics determine which experiments to fund.

- Personalize messaging: Use ICP traits to generate landing pages, onboarding flows, and trial prompts that resonate.

- Evaluate outcomes: Measure success against ICP-specific KPIs (time-to-value, retention for that profile) rather than generic metrics.

- Align GTM: Ensure sales, marketing, and product experiments are optimized for the same ICP to avoid noisy signals.

When your ICP is explicit and operationalized, AI-driven validation becomes focused, actionable, and far less likely to chase misleading patterns.

Stop Measuring PMF by How Many Demos You Book

Product‑market fit isn’t a trophy — it’s a running system you keep tuning. AI doesn’t replace judgment; it multiplies it. Start with a razor‑sharp Ideal Client Profile, collect structured interviews, surveys, and product telemetry, then use AI as a co‑thinking partner to cluster feedback, surface leading churn signals, and generate testable hypotheses.

Run rapid, controlled experiments targeted to ICP cohorts, measure time‑to‑value and retention, and feed results back to retrain models. Do that repeatedly.

Golden nugget: before any AI analysis, pick one ICP segment and one actionable value metric (e.g., 30‑day retention for activated users) — everything you measure, test, and iterate should map to those two anchors.

If you do this, AI converts noise into a prioritized roadmap, not a report. Your job as founder is to decide which signals matter and move decisively. Start small, instrument ruthlessly, and let data plus judgment guide doubling down; share concise findings across product, sales, and marketing every week so teams learn quickly and prioritize high‑impact bets.

Treat AI as your thinking partner, not your oracle, and make validation a continuous loop. That’s how good products become indispensable.
