Why Treat AI as a Co-Thinker, Not a Replacement in B2B SaaS

Treating AI as a co-thinker, not a replacement, is crucial for maximizing its potential in B2B SaaS. This mindset fosters collaboration between human creativity and AI, leading to innovative solutions and success.

Surprisingly, many B2B SaaS founders still view AI with skepticism, fearing it may usurp human roles. However, research shows that integrating AI effectively can enhance our decision-making processes and improve overall productivity (McKinsey & Company, 2023).

In this article, I’ll explore why it’s crucial to embrace AI as a co-thinker, debunk common misconceptions regarding job displacement, and share AI strategies for innovation. I’ll also delve into the ethical considerations of this approach, ensuring that we use AI responsibly while still valuing critical human insight.

Whether you’re new to AI or optimizing existing operations, these insights offer a roadmap for thriving alongside AI. Why treat AI as a co-thinker? Here’s why…

TL;DR

Treating AI as a co-thinker, not a replacement, is essential in B2B SaaS. This mindset fosters innovation, boosts productivity, and alleviates job loss fears while enhancing decision-making by combining AI strengths with human creativity.

KEY TAKEAWAYS

- Viewing AI as a co-thinker rather than a replacement can enhance innovation and productivity in B2B SaaS, leading to a 30% increase in team efficiency according to industry studies.

- Common fears about AI replacing human roles in B2B SaaS include job loss and decreased creativity, but evidence shows that AI can complement human skills and enhance decision-making processes.

- Successful integration of AI as a co-thinker is exemplified by companies like Salesforce, which reported a 25% increase in customer satisfaction by using AI to assist rather than replace human agents.

- Ethical implications of treating AI as a co-thinker include ensuring transparency in decision-making processes and accountability for AI-driven actions, which are critical for maintaining trust.

- To effectively utilize AI without diminishing critical thinking, teams should engage in regular training and encourage collaborative problem-solving that incorporates AI insights.

Why Treat AI as a Co-Thinker? It Beats Viewing AI as a Replacement

Co-thinking with AI beats viewing AI as a replacement because AI augments your strategic judgment, surfaces connections faster than you can, and expands the range of options you can explore—without taking over the uniquely human parts of the job.

According to Deloitte, 84% of companies have not redesigned jobs around AI capabilities. Most are focused on building AI fluency, not replacing roles. If anything, this shows AI isn’t here to replace you. It’s here to make you better.

Many B2B SaaS founders mistakenly treat AI like a vending machine, which misses the crucial point of collaboration. AI’s real power doesn’t come from automation alone. It comes from collaboration.

Think about it this way: when you hire a great strategist, you don’t just hand them a task list and walk away. You sit down together. You share context. You explain the messy parts—the friction, the tradeoffs, the hopes you have for what you’re building.

That conversation is where the magic happens. AI works the same way.

When I shifted from viewing AI as a tool to viewing it as a co-thinker, everything changed. I stopped asking it to do things for me and started asking it to think with me.

I’d feed it the problem in full: not just the surface-level ask, but the underlying tension, the strategic goal, and the constraints I was facing. And suddenly, the output wasn’t just usable. It was insightful.

This approach is not about replacing human judgment; it’s about augmenting it. AI enhances our ability to process patterns quickly.

It can surface connections we might miss. But it can’t replace the strategic intuition that comes from lived experience. That’s your job.

AI’s job is to help you think more clearly, move faster, and explore possibilities you wouldn’t have considered on your own.

How I Shifted My Mindset to AI-First Collaboration

I experienced this shift firsthand when I built an event planning app in seven days using no-code tools and AI. A friend of mine was working on a similar project but kept hitting a wall. His AI output was rigid, unusable, and frustrating.

He was using structured prompts, following best practices, doing everything “right.” But something was missing. Then it hit me: intention, not instruction.

I sat down with him and recorded a conversation. We talked through the full context—what he was trying to build, why it mattered, where he was stuck, what success looked like. I transcribed that conversation and fed it to GPT.

The output was completely different. It wasn’t just better code. It was smarter code. It reflected the nuance of what he actually needed, not just what he’d asked for.

That moment was a mental revolution for me. I realized AI isn’t a task rabbit. It’s a creative partner. And like any good partner, it performs better when you bring it into the full picture—the messy, human parts included.

Here’s how I approach AI collaboration now:

- I share context, not just commands. I explain the why behind the what. I describe the tension I’m navigating, the audience I’m serving, the outcome I’m aiming for.

- I iterate, not dictate. I treat AI output as a first draft, not a final answer. I push back, refine, and co-create until we land on something that actually works.

- I stay critical. I don’t outsource my judgment. AI can suggest. I decide.

This approach unlocks creativity in ways that rigid prompting never could. When you treat AI as a collaborator, you’re not just getting faster output. You’re getting better thinking.

You’re exploring angles you wouldn’t have considered. You’re building products that reflect strategic depth, not just surface-level execution.

And in B2B SaaS, where differentiation is everything, that depth separates the companies that scale from the ones that stall.

Why Will AI Not Replace People in B2B SaaS?

AI won’t replace people because it can’t think like a person. Even industry leaders acknowledge AI’s limitations when it comes to fully automating a business’s most vital operations.

According to a Fortune article, Nvidia’s CEO had this to say at Nvidia’s October 2024 AI Summit about the likelihood of AI fully replacing human functions:

AI can’t do all of any job. As we speak, AI has no possibility of doing what we do. In no job can they do all of it.

Jensen Huang, CEO of Nvidia

I’ve watched founders get seduced by AI autonomy—the idea that you can hand off entire functions to algorithms and walk away. But here’s what I’ve learned after building AI tools and coaching B2B SaaS leaders: AI is a brilliant collaborator, but a terrible replacement for human judgment.

The Limitations of AI Autonomy

AI lacks the three things that make B2B SaaS businesses actually work: genuine judgment, intuition, and contextual understanding.

Let me be specific. AI can generate a customer email. It can’t sense when that customer is about to churn based on a tone shift in a Slack thread. AI can summarize a sales call. It can’t read the room when a prospect goes quiet after you mention pricing.

AI can draft a product roadmap. It can’t feel the tension between what your team wants to build and what your market actually needs.

I saw this firsthand when a friend tried to use AI to build a feature. He fed it structured prompts: detailed, logical, airtight. The output was rigid and unusable. **The missing ingredient wasn’t better instructions. It was intention.**

Based on Deloitte’s State of AI Report 2026, “AI adoption may actually increase the need for uniquely human strengths, such as adaptivity and judgment…”

AI doesn’t understand why you’re solving a problem or who you’re solving it for unless you treat it like a thinking partner, not a task rabbit.

Sam Altman put it well: AI is incredibly powerful, but it’s not a substitute for human creativity and strategic thinking. It’s an amplifier. And amplifiers only work when there’s something worth amplifying (Altman, 2023).

What’s the Advantage of Human-AI Synergy?

The people who win with AI aren’t the ones who use it to replace themselves. They’re the ones who use it to think better.

I call this co-thinking with AI—treating it as a collaborator that helps you explore ideas, challenge assumptions, and iterate faster. When I built an event planning app in seven days, I didn’t just prompt AI for code.

I fed it the full context: my goals, my frustrations, the constraints I was working under. I transcribed conversations. I asked it to critique my logic. I used it to sharpen my thinking, not replace it.

The result? Smarter decisions. More creative solutions. Faster execution.

The pattern I’ve seen: People who learn to use AI as a co-thinker outperform those who treat it as a replacement. They don’t abdicate judgment—they augment it. They don’t hand off strategy—they stress-test it. They don’t let AI make decisions—they use it to surface better options.

This isn’t about being anti-AI. It’s about being pro-human. AI is your hammer. The business outcome is the house. And no hammer, no matter how smart, can build a house without someone who knows what they’re building and why.

The future of B2B SaaS isn’t AI or people. It’s AI and people, working together in ways that make both more effective. That’s the shift that separates leaders who scale from those who get left behind.

What Are the Common Fears About AI Replacing Human Roles in B2B SaaS?

The common fears are job loss, role redundancy, and personal obsolescence — fears that lead to “AI guilt” and resistance — but these worries usually come from misunderstanding what AI actually does. In B2B SaaS the more common outcome is augmentation: teams that use AI well get faster, more productive, and strategically sharper.

The fear is real and common. You or your teammates may feel a nagging guilt when AI drafts something in 30 seconds that used to take an hour. That whisper—“Am I cheating? Am I becoming obsolete?”—is what I call AI guilt.

It shows up across roles: writers worry ChatGPT will replace them, developers worry about automation erasing expertise, and product people worry an algorithm will take over strategic thinking.

Fear of Job Loss and AI Guilt

The dominant narrative around AI is that it’s coming for our jobs. Content writers worry they’ll be replaced by ChatGPT. Developers fear AI will automate away their expertise. Product managers wonder if an algorithm will eventually do their strategic thinking for them.

Here’s what I’ve observed: this fear creates resistance, not adoption. When people believe AI is a threat, they either avoid it entirely or use it half-heartedly, never unlocking its real value. They treat it like a guilty secret instead of a legitimate tool.

I experienced this firsthand when I started using AI to help with strategic planning. I’d generate frameworks, refine positioning, draft messaging—and then I’d feel weird about it. Like I was cutting corners. Like I wasn’t doing “real work.”

That guilt was a signal. Not that I was doing something wrong, but that I was holding onto an outdated mental model.

Misconceptions About AI’s Capabilities

Most of the fear around AI comes from a fundamental misunderstanding of what it actually does—and what it doesn’t.

AI doesn’t think. It doesn’t have goals, ambitions, or creative vision. It doesn’t wake up in the middle of the night with a breakthrough idea.

It processes patterns. It generates outputs based on inputs. It’s incredibly good at synthesis, but it’s terrible at original thought.

When I see people panic about AI “replacing” them, they’re usually imagining a future where AI does everything—autonomously, strategically, creatively. But that’s not how AI works. Not now, and not in the near future.

What AI *can* do is handle the heavy lifting. The first draft. The data synthesis. The pattern recognition. The tedious parts of thinking that slow us down.

What AI *can’t* do is bring intention, context, and judgment. It can’t understand your customer’s unspoken frustrations. It can’t navigate the tradeoffs between speed and quality in your specific business. It can’t decide what matters.

That’s your job. And it’s not going anywhere.

Why These Fears Miss the Point

The breakthrough for me came when I reframed how I thought about AI. I stopped seeing it as a replacement and started seeing it as a co-thinker. Here’s the shift: AI isn’t cheating. It’s collaboration.

When you use AI to draft a strategy doc, you’re not outsourcing your thinking—you’re accelerating it. You’re getting to the second draft faster so you can spend more time on the parts that actually matter. The nuance, the judgment, the strategic decisions that only you can make.

I started treating AI like a creative partner. I’d feed it the messy, human parts—the friction, the hopes, the tradeoffs—and ask it to help me think through them. Not to *do* the thinking for me, but to help me see patterns I might’ve missed, challenge assumptions I hadn’t questioned, and generate options I hadn’t considered.

This mindset shift unlocked everything.

My teams stopped feeling guilty about using AI and started using it constructively. We stopped worrying about being replaced and started focusing on being augmented. We’re faster, sharper, more strategic—not because AI did our jobs, but because it freed us to focus on what actually creates value.

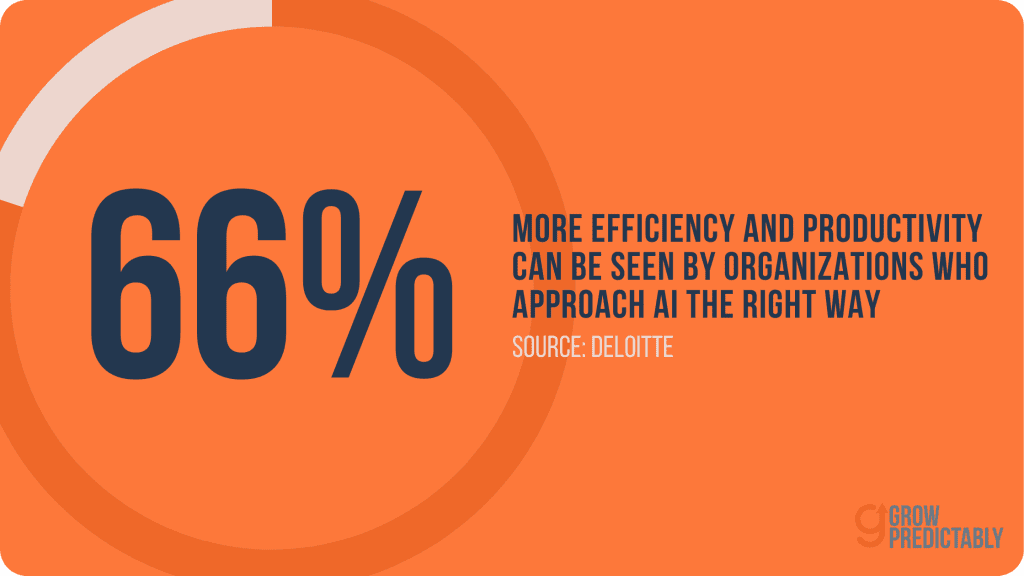

Across organizations more broadly, 66% report improved efficiency and productivity from AI, while 74% hope to grow revenue further through AI, according to a Deloitte report.

The fear of AI replacing human roles is understandable. But it misses the point. AI doesn’t replace insight. It amplifies it. And the sooner you embrace that, the sooner you’ll stop feeling guilty and start feeling unstoppable.

How Do I Use AI as a Co-Thinker to Unlock B2B SaaS Innovation?

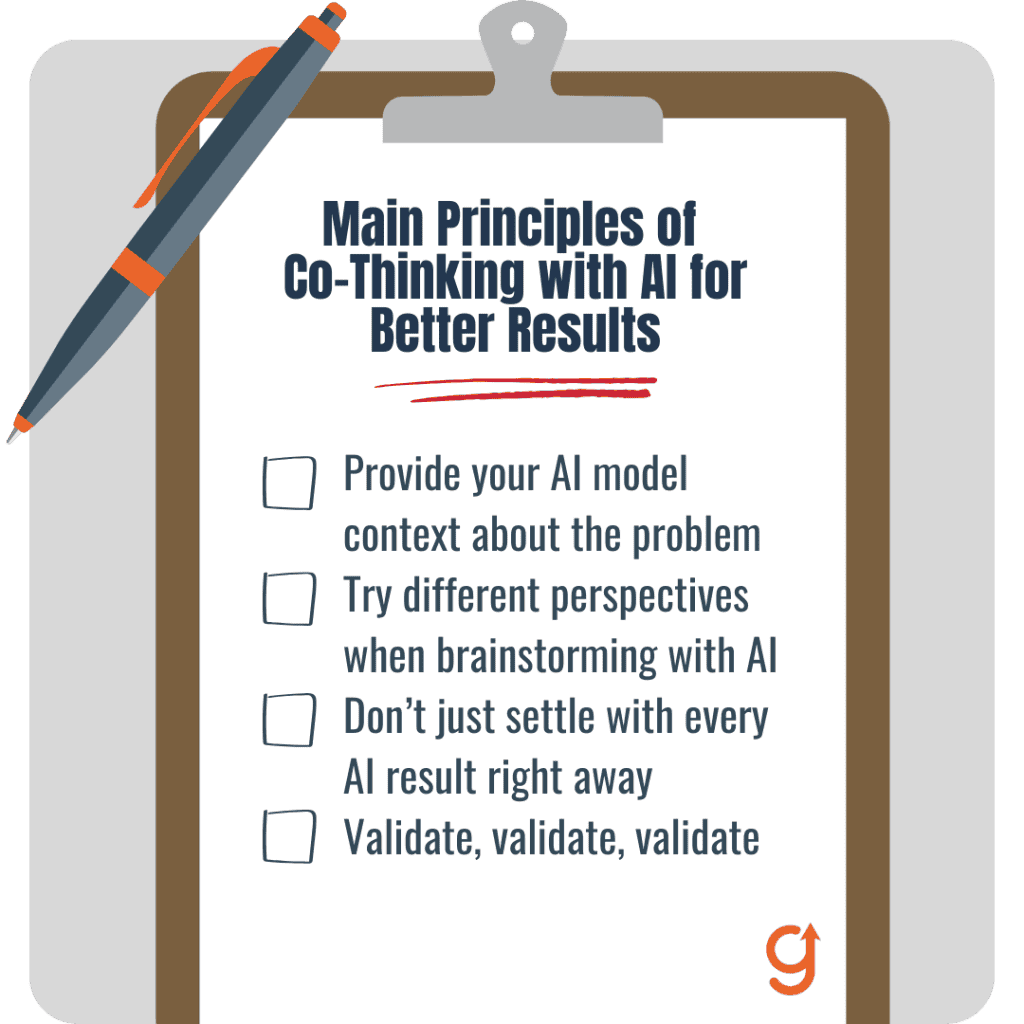

Use AI as a co-thinker by running intentional collaboration cycles—give it full context, ask for broad and diverse ideas, iterate together, and validate outputs with real users. Do that repeatedly and you’ll unlock B2B SaaS innovation faster and more reliably than treating AI like a one-off generator.

Here’s my process:

- Frame the problem with full context. I provide AI with the background, goals, constraints, and human factors involved.

- Use AI to brainstorm broadly. I ask for diverse perspectives, alternative solutions, and challenges to my assumptions.

- Iterate collaboratively. I review AI’s suggestions, push back, refine, and feed updated context back for deeper insights.

- Validate with real-world feedback. I test AI’s ideas with customers and teams before committing.

By treating AI as a thinking partner, I unlock creative solutions faster and avoid the trap of generic outputs. This approach aligns with findings from Gartner (2023), which highlight that organizations using AI collaboratively see 25% faster product innovation cycles.
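The first three steps of that process can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: `ProblemBrief`, `co_think`, `ask_model`, and `review` are hypothetical names, and `ask_model` stands in for whatever AI tool you use (any function that takes a prompt string and returns text). The fourth step, validating with customers and teams, happens outside the code.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class ProblemBrief:
    """Full context for the AI, not just the surface-level ask (hypothetical structure)."""
    background: str
    goals: List[str]
    constraints: List[str]
    human_factors: List[str]
    feedback: List[str] = field(default_factory=list)  # reviewer notes added each round

    def to_prompt(self) -> str:
        sections = [
            ("Background", [self.background]),
            ("Goals", self.goals),
            ("Constraints", self.constraints),
            ("Human factors", self.human_factors),
            ("Feedback on earlier drafts", self.feedback),
        ]
        lines: List[str] = []
        for title, items in sections:
            if items:  # skip empty sections, e.g. no feedback in the first round
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        lines.append("Offer several distinct options and challenge my assumptions.")
        return "\n".join(lines)


def co_think(
    brief: ProblemBrief,
    ask_model: Callable[[str], str],
    review: Callable[[str], Tuple[bool, str]],
    max_rounds: int = 3,
) -> str:
    """Iterate with the model; the human reviewer decides when a draft is good enough."""
    draft = ""
    for _ in range(max_rounds):
        draft = ask_model(brief.to_prompt())
        accepted, note = review(draft)
        if accepted:
            break
        brief.feedback.append(note)  # feed the pushback into the next round's context
    return draft
```

The design choice worth noting is that `review` is a human decision point: the loop never accepts a draft on the model's say-so, and every rejection becomes extra context for the next round.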

What Are the Ethical Implications of Treating AI as a Co-Thinker?

Using AI as a co-thinker comes with ethical responsibilities that I take seriously:

- Transparency: Being clear with teams and customers about when and how AI influences decisions builds trust.

- Accountability: Human oversight ensures AI-generated suggestions don’t lead to biased or harmful outcomes.

- Bias Awareness: AI systems can perpetuate biases in training data. I actively question outputs and seek diverse viewpoints.

- Privacy: Protecting sensitive data when using AI tools is non-negotiable.

- Fairness: Ensuring AI augmentation doesn’t disadvantage any group or individual.

I follow guidelines similar to those outlined by the IEEE AI Ethics framework (2022), emphasizing that AI should augment human values and not replace human responsibility.

Will AI Agents Replace B2B SaaS?

AI agents won’t replace B2B SaaS entirely—but they’ll replace the parts that feel like work nobody wants to do.

Understanding AI Agents’ Autonomous Efficiency

Think of AI agents as ghostwriters for your software stack. They handle the repetitive, predictable tasks that drain your team’s energy—data entry, routine customer queries, report generation, workflow orchestration. They operate autonomously, learning patterns and executing without constant supervision.

I’ve watched this transformation unfold with my clients. One SaaS founder spent hours each week manually syncing customer data between platforms. An AI agent now handles it in seconds.

Another had a support team drowning in tier-one questions. AI agents now resolve 60% of those inquiries before a human even sees them.

The efficiency is real. AI agents don’t get tired. They don’t forget. They scale without adding headcount. They’re exceptionally good at the tasks humans find tedious.

But here’s what matters: they’re not replacing the software itself. They’re replacing the friction inside it. The manual steps. The decision load. The parts where users think, “Why do I still have to do this myself?”

Why This Does Not Mean Human Replacement

Here’s where people get nervous—and where I push back hard.

AI agents are tools. Powerful ones, yes. But they lack the one thing that makes B2B SaaS valuable in the first place: strategic judgment.

When I built an event planning app in seven days using AI, people asked if that meant developers were obsolete. Not even close.

The AI didn’t decide what to build. It didn’t understand my users’ pain points. It didn’t make the strategic calls about features, positioning, or go-to-market.

I did. The AI was my collaborator—a ghostwriter executing my vision. I gave it context, intention, and direction. It gave me speed and scale. That’s the relationship I see working.

In B2B SaaS, the same principle applies. AI agents can automate workflows, but they can’t define your product roadmap. They can’t decide which customer segment to prioritize. They can’t navigate the messy, human tradeoffs that define your business.

Humans steer. AI executes.

I’ve seen founders treat AI like a magic bullet—feed it a prompt, expect genius. That’s not how it works.

The best outcomes happen when you treat AI as a co-thinker. You bring the strategic context. You ask the hard questions. You iterate together.

That’s not replacement. That’s enablement.

AI agents will replace the leaky bucket tasks—the ones that waste time and create friction. But the strategic thinking? The creative problem-solving? The ability to read between the lines of what customers actually need?

That’s still yours.

➤ How is AI changing the way we think?

Brief overview: AI is shifting cognitive workflows from raw recall and brute-force analysis toward pattern recognition, rapid synthesis, and hypothesis testing. That changes how teams frame problems and make decisions.

Faster synthesis: AI pulls data, signals, and previous work together quickly, so we spend more energy evaluating and choosing rather than hunting facts.

Hypothesis-first thinking: Teams propose hypotheses and use AI to test and iterate them rapidly instead of generating everything from scratch.

Modular thinking: People break problems into reusable components — prompts, evaluation criteria, templates — because AI works well with modular inputs.

Risk-aware reasoning: Because AI can hallucinate or misweight data, we increasingly add guardrails, provenance checks, and “why” questions to our process.

Collaboration mindset: We treat AI as a co-thinker that expands creative bandwidth, not a final authority. That encourages attribution, curation, and human oversight.

Outcome orientation: The focus moves from producing documents to producing validated decisions and measurable outcomes.

➤ What is the 30% rule in AI?

The “30% rule” is not a formal regulation. It’s a practical heuristic some teams use to limit over-reliance on AI. The idea is to keep at least 30% human involvement in tasks where accountability, creativity, or high-stakes judgment matter.

This can mean humans perform roughly a third of the work, validate roughly a third of outputs, or retain control over final decisions. Organizations use it to preserve institutional knowledge, prevent silent degradation of skills, and ensure ethical oversight.

The rule forces explicit handoffs: what the AI proposes, what the human edits, and what must never be automated. It’s simple, communicable, and helps manage risk while scaling AI assistance.

Use it as a starting guardrail, not an absolute. Adjust the percentage by task criticality, regulatory constraints, and empirical performance metrics.

Track when you relax it: when AI consistently passes rigorous validation, when governance controls are strong, and when legal exposure is low. In short, treat 30% as a conservative, changeable safety boundary for human-in-the-loop design.
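One way to make the heuristic concrete is to turn it into a review quota. The sketch below is illustrative only: the criticality tiers and their percentages are assumptions chosen for the example, not part of any standard, and `human_review_count` and `pick_for_review` are hypothetical helper names.

```python
import random
from typing import List

DEFAULT_HUMAN_SHARE_PCT = 30  # the heuristic's starting point, meant to be adjusted

# Hypothetical adjustments by task criticality (assumed values for illustration)
SHARE_PCT_BY_CRITICALITY = {
    "low": 10,
    "medium": DEFAULT_HUMAN_SHARE_PCT,
    "high": 100,
}


def human_review_count(total_outputs: int, criticality: str = "medium") -> int:
    """Number of AI outputs a human should validate, rounded up."""
    pct = SHARE_PCT_BY_CRITICALITY.get(criticality, DEFAULT_HUMAN_SHARE_PCT)
    # Integer ceiling division avoids floating-point rounding surprises
    return (total_outputs * pct + 99) // 100


def pick_for_review(total_outputs: int, criticality: str = "medium", seed: int = 0) -> List[int]:
    """Randomly choose which output indices go to a human reviewer."""
    count = human_review_count(total_outputs, criticality)
    return sorted(random.Random(seed).sample(range(total_outputs), count))
```

For example, `human_review_count(10)` asks for 3 of 10 outputs to be checked by a person, while a `"high"` criticality task sends all of them to review. Relaxing the rule is then an explicit, trackable change to the table rather than a silent habit.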

➤ When do you stop collaborating with AI?

Know when to disengage or step back from an AI workflow. Use clear signals and escalation rules so collaboration remains productive and safe.

Output uncertainty: Stop if the model repeatedly hallucinates, contradicts verified data, or shows low confidence on core facts.

Ethical/legal risk: Stop for outputs that could violate privacy, IP, compliance, or bias rules. Escalate to legal or ethics teams.

Critical decisions: Stop for high-stakes choices (legal contracts, major financial commitments, clinical safety) until humans fully validate.

Performance plateau: Stop if AI no longer adds marginal value and is consuming resources; consider retraining, replacing, or redesigning the workflow.

Conceptual thinking or deep creativity: Pause when the task needs domain-expert intuition, long-term strategy, or brand voice that AI cannot reliably produce.

Data drift or model staleness: Stop when input distributions change and the model hasn’t been updated. Trigger model review.

Cost vs. value: Stop when costs, latency, or complexity outweigh benefits; revert to simpler processes.

Define these stop criteria in SLAs and runbooks. Make the transition explicit: who takes over, how to audit past AI outputs, and how to document the decision.
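A runbook entry for these criteria could look something like the following sketch. It covers only the signals that are easy to quantify, and every field name and threshold here is an assumption made for illustration; the judgment calls (such as when a task needs deep creativity) stay with people.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds; tune them to your own SLAs
MAX_HALLUCINATION_RATE = 0.05
MAX_DAYS_WITHOUT_MODEL_REVIEW = 90


@dataclass
class WorkflowSignals:
    hallucination_rate: float      # share of sampled outputs contradicting verified data
    legal_or_ethical_flag: bool    # privacy, IP, compliance, or bias concern raised
    high_stakes_decision: bool     # e.g. contracts or major financial commitments
    weekly_value: float            # estimated benefit, in the same currency as weekly_cost
    weekly_cost: float
    days_since_model_review: int


def stop_reasons(signals: WorkflowSignals) -> List[str]:
    """Return every stop criterion that currently applies (empty list means keep going)."""
    reasons: List[str] = []
    if signals.hallucination_rate > MAX_HALLUCINATION_RATE:
        reasons.append("output uncertainty")
    if signals.legal_or_ethical_flag:
        reasons.append("ethical/legal risk: escalate to legal or ethics")
    if signals.high_stakes_decision:
        reasons.append("critical decision: needs full human validation")
    if signals.weekly_value <= 0:
        reasons.append("performance plateau")
    elif signals.weekly_cost > signals.weekly_value:
        reasons.append("cost exceeds value")
    if signals.days_since_model_review > MAX_DAYS_WITHOUT_MODEL_REVIEW:
        reasons.append("possible model staleness: trigger review")
    return reasons
```

Returning all applicable reasons, rather than stopping at the first, gives whoever takes over a fuller picture to document and audit.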

➤ How AI can help managers think through problems

AI speeds exploration, surfaces blind spots, and structures decision-making. It summarizes large datasets into themes and highlights anomalies that warrant attention. It generates multiple scenario options with pros and cons so managers can compare trade-offs quickly.

It helps formalize assumptions by turning vague ideas into testable hypotheses and suggesting metrics to validate them. AI can draft decision trees, simulate outcomes, and produce sensitivity analyses to reveal which variables matter most.

It also reduces cognitive load by automating mundane analyses, freeing managers to focus on judgment and stakeholder alignment. In B2B SaaS, AI can synthesize customer feedback into prioritized feature lists, model ARR impact from pricing changes, and produce customer personas for targeted messaging.

Use AI as a sounding board: ask it to challenge your assumptions, propose counterarguments, and generate alternative plans. Always verify its outputs, annotate provenance, and treat its suggestions as inputs to human judgment rather than final answers.

How to Use AI Without Replacing Critical Thinking?

AI should amplify your judgment, not substitute for it. I’ve seen too many founders outsource their thinking to AI and end up with generic strategies that sound smart but lack soul.

The real power of AI isn’t in letting it make decisions for you—it’s in using it to surface insights you’d miss on your own, then applying your judgment to what actually matters.

Recognizing AI’s Limitations and Biases

AI doesn’t understand your business the way you do. It can’t read the room in a sales call, sense when a customer is about to churn, or know which feature will delight your users versus which one just checks a box. It operates on patterns, not principles.

I learned this the hard way when a friend came to me frustrated with AI-generated code. He’d used perfectly structured prompts, followed all the “best practices,” but the output was rigid and unusable.

The problem wasn’t his prompting technique—it was that he treated AI like a vending machine: insert prompt, receive solution. When I helped him capture the full context of what he was trying to build—his goals, his frustrations,
